Short Bio

I am a Lappan-Phillips Professor of Computing Education in the College of Education and the College of Natural Science at Michigan State University. In addition to a Ph.D. in Learning, Design, and Technology, I hold a bachelor's and a master's degree in Electrical Engineering. My research and teaching focus on supporting educators to understand, apply, and critically evaluate the use of computing in K-12 classrooms.

Contact

Dr. Aman Yadav, Professor, Educational Psychology and Educational Technology, East Lansing, MI 48824, U.S.A. Email: ayadav (at) msu.edu. Phone: 517-884-2094. Follow @yadavaman

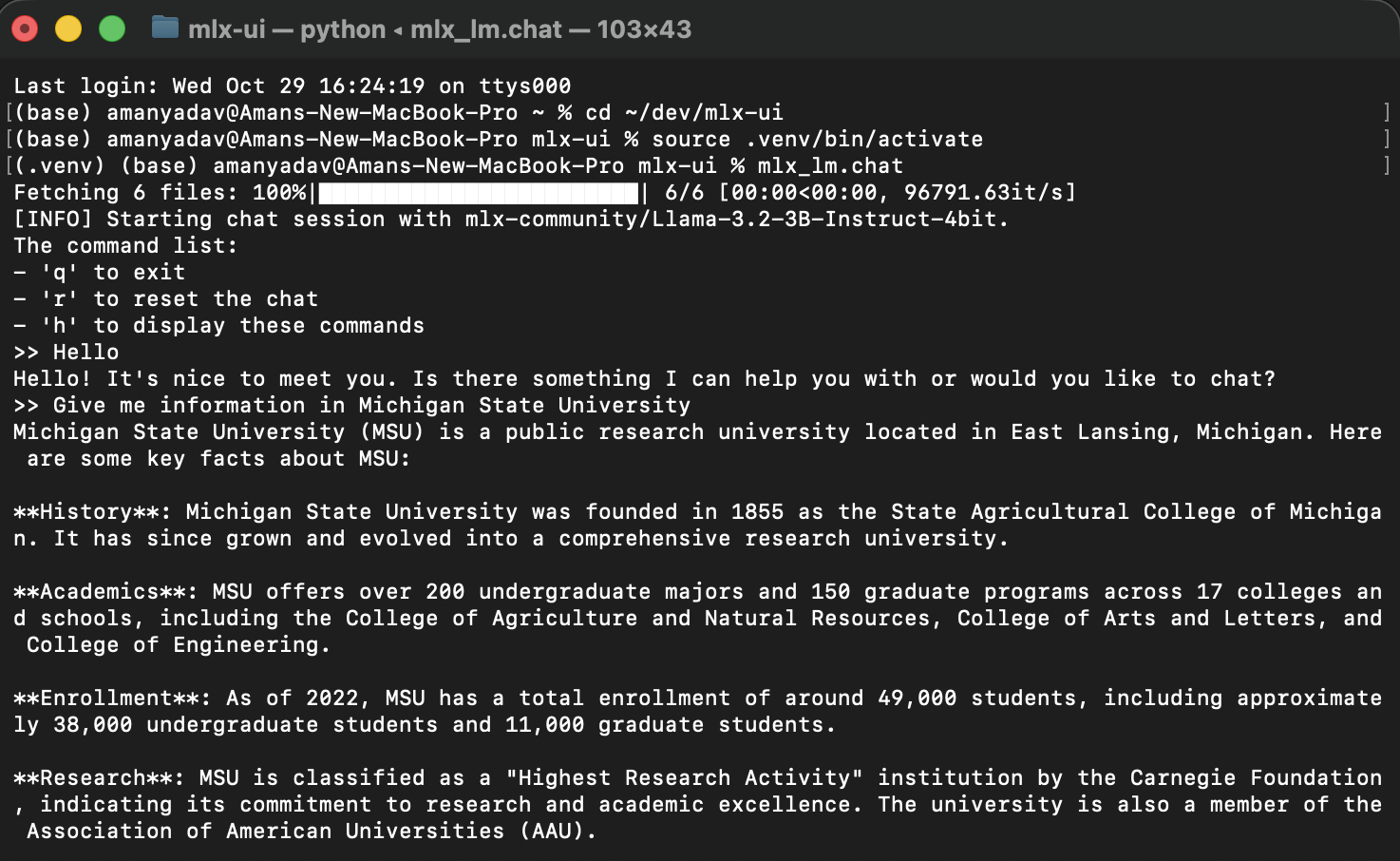

Build a Streamlit WebUI for Your Local MLX Model

In Part 1 of this series, we installed MLX and ran a local large language model (LLM), such as Mistral 7B Instruct, directly on macOS. Now, let's take it a step further and build a web-based chat interface using Streamlit, so you can interact with your local model just like ChatGPT, right from your browser. Why Streamlit? Streamlit is a powerful Python…
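As a rough illustration of the idea in this post, here is a minimal sketch of a Streamlit chat page over a local MLX model. It assumes `pip install streamlit mlx-lm` on an Apple Silicon Mac; the file name `app.py`, the model ID, and the helper names `to_messages` and `run_app` are illustrative choices, not code from the post. It uses Streamlit's `st.chat_message`, `st.chat_input`, `st.session_state`, and `st.cache_resource`, plus `load` and `generate` from `mlx_lm`.

```python
# app.py: minimal sketch of a Streamlit chat UI for a local MLX model.
# Assumptions: `pip install streamlit mlx-lm` on an Apple Silicon Mac; the model ID,
# file name, and helper names are illustrative, not taken from the original post.
import sys

MODEL_ID = "mlx-community/Mistral-7B-Instruct-v0.3-4bit"  # assumed model choice


def to_messages(history, user_text):
    """Return a new message list: prior chat turns plus the latest user turn."""
    return history + [{"role": "user", "content": user_text}]


def run_app():
    import streamlit as st
    from mlx_lm import load, generate

    @st.cache_resource  # load the weights once per server process, not on every rerun
    def get_model():
        return load(MODEL_ID)

    st.title("Local MLX Chat")
    if "history" not in st.session_state:
        st.session_state.history = []

    # Streamlit reruns the whole script on each interaction, so replay past turns.
    for msg in st.session_state.history:
        with st.chat_message(msg["role"]):
            st.markdown(msg["content"])

    user_text = st.chat_input("Ask your local model")
    if user_text:
        with st.chat_message("user"):
            st.markdown(user_text)
        messages = to_messages(st.session_state.history, user_text)
        model, tokenizer = get_model()
        # Format the whole conversation with the model's own chat template.
        prompt = tokenizer.apply_chat_template(messages, add_generation_prompt=True)
        reply = generate(model, tokenizer, prompt=prompt, max_tokens=512)
        with st.chat_message("assistant"):
            st.markdown(reply)
        st.session_state.history = messages + [{"role": "assistant", "content": reply}]


# Render only when launched with `streamlit run app.py` (the CLI has already imported
# streamlit); running or importing the file without Streamlit stays side-effect free.
if "streamlit" in sys.modules:
    run_app()
```

Launch it with `streamlit run app.py` and open the local URL Streamlit prints. Caching the loaded model with `st.cache_resource` matters here because Streamlit re-executes the script on every message, and reloading a multi-gigabyte model each time would make the chat unusable.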

Local LLM on Apple w/ MLX

Running a large language model (LLM) on your own machine used to be a distant dream; now it's possible and surprisingly simple thanks to Apple's MLX framework. MLX is Apple's machine learning library optimized for Apple Silicon, allowing you to run and fine-tune powerful models locally, without needing a GPU cluster or…
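To make the workflow concrete, here is a minimal sketch of on-device generation through the `mlx-lm` package, which builds on MLX. It assumes an Apple Silicon Mac with `pip install mlx-lm`; the model ID is an illustrative choice, and the helper names `build_messages` and `ask_local_model` are hypothetical, not from the post.

```python
# Minimal sketch of local text generation with MLX via the mlx-lm package.
# Assumptions: Apple Silicon Mac, `pip install mlx-lm`; the model ID is an
# illustrative choice and the helper names here are hypothetical.
MODEL_ID = "mlx-community/Mistral-7B-Instruct-v0.3-4bit"


def build_messages(user_prompt):
    """Return a chat-style list of role/content dicts for the tokenizer's chat template."""
    return [{"role": "user", "content": user_prompt}]


def ask_local_model(user_prompt, max_tokens=256):
    """Load the model (downloaded on first use) and generate a reply on-device."""
    # Imported inside the function so build_messages() works without MLX installed.
    from mlx_lm import load, generate

    model, tokenizer = load(MODEL_ID)
    # Wrap the prompt in the model's own chat template before generating.
    prompt = tokenizer.apply_chat_template(
        build_messages(user_prompt), add_generation_prompt=True
    )
    return generate(model, tokenizer, prompt=prompt, max_tokens=max_tokens)
```

For example, `print(ask_local_model("Explain what MLX is in one sentence."))` downloads the weights on the first call and then runs entirely on the Mac. Applying the chat template matters for instruction-tuned models, which generally respond better when the prompt matches the format they were fine-tuned on.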

PhD Program in Educational Psychology & Educational Technology at MSU

If you are considering applying to the PhD program in Educational Psychology and Educational Technology (EPET) at Michigan State University to work with me, here is some information you should consider. My work is in computing education research (CER), with a particular focus on preparing teachers on supporting educators to…

Empowering Future Teachers with Generative AI Education

As generative AI tools begin to reshape our world, teachers must be equipped with the knowledge and skills to guide students in understanding and using these technologies responsibly. Our NSF-funded project, Empowering Future Teachers with Generative AI Education, aims to prepare pre-service teachers with a curriculum that highlights both the potential…